A Dummies Guide to AI & the Technology Behind It.

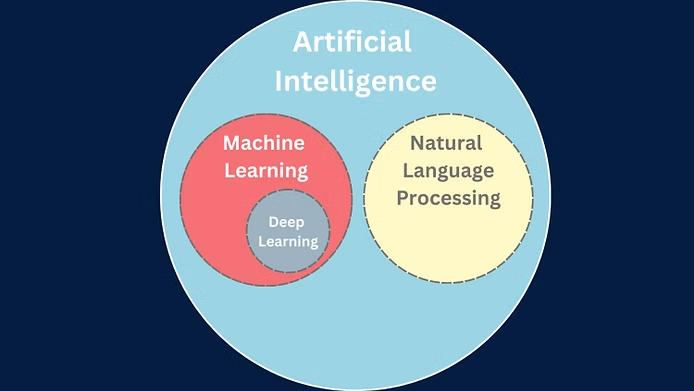

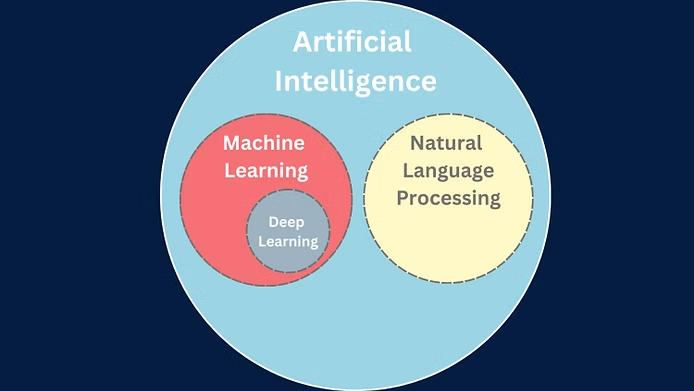

Artificial Intelligence (AI) is an umbrella term for technologies that simulate aspects of human intelligence. This article covers the basics of AI and the jargon surrounding it, and explains how these interconnected technologies relate to one another. Our aim is to give readers knowledge that helps them embrace, rather than fear, the capabilities of AI and its applications.

At the core of AI lies a complex interplay of technologies that work together to enable machines to learn, reason, and respond to their environment. Three key technologies underpinning AI are machine learning, deep learning, and natural language processing.

What is Machine Learning?

Machine learning is a subfield of AI in which algorithms are trained on data to make predictions or decisions. Instead of following rules written out explicitly by a programmer, a machine learning model learns patterns from examples, so its performance can improve as it processes more data. Machine learning has a wide range of applications, including image and speech recognition, natural language processing, medical diagnosis, financial analysis, and recommendation systems.
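To make "learning from data" concrete, here is a minimal sketch in Python. It fits a straight line to a handful of example points using least squares, one of the simplest learning methods, and then predicts a value it has never seen. The function names (`fit_line`, `predict`) and the study-hours data are made up for illustration; real projects would normally use a library rather than hand-written maths.

```python
def fit_line(xs, ys):
    """Learn a slope and intercept from example points (least squares)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # How x and y vary together, divided by how much x varies on its own.
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Use the learned slope and intercept to predict y for a new x."""
    slope, intercept = model
    return slope * x + intercept


# Hypothetical training data: hours studied -> exam score.
hours = [1, 2, 3, 4, 5]
scores = [52, 54, 56, 58, 60]

model = fit_line(hours, scores)
print(predict(model, 6))  # prediction for 6 hours, a value not in the data
```

Nobody wrote a rule saying "each extra hour adds two points"; the program worked that out from the examples. That is the core idea, even though real machine learning models have far more parameters.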

What is Deep Learning?

Deep learning, a subset of machine learning, uses artificial neural networks (ANNs), which are loosely inspired by the human brain, to capture complex patterns and relationships within data. A deep learning model consists of multiple layers of interconnected nodes, which lets it learn from vast amounts of data and, given enough of it, make highly accurate predictions. These models are responsible for groundbreaking advancements in areas like image recognition, natural language understanding, and game-playing AI.
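The "layers of interconnected nodes" idea can be sketched in a few lines of Python. In this illustrative example, each node computes a weighted sum of its inputs and squashes the result with a sigmoid function, and the output of one layer becomes the input of the next. The weights below are hand-picked placeholders, not trained values; in a real network they would be learned from data.

```python
import math


def sigmoid(z):
    """Squash any number into the range 0..1."""
    return 1.0 / (1.0 + math.exp(-z))


def layer(inputs, weights, biases):
    """One layer: every node takes a weighted sum of the inputs, then applies sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + bias)
        for node_weights, bias in zip(weights, biases)
    ]


def forward(inputs, network):
    """Pass the inputs through each layer in turn."""
    activations = inputs
    for weights, biases in network:
        activations = layer(activations, weights, biases)
    return activations


# A tiny network: 2 inputs -> 3 hidden nodes -> 1 output node.
# These numbers are arbitrary placeholders chosen for the example.
network = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),
    ([[0.7, -0.5, 0.2]], [0.05]),
]

output = forward([1.0, 2.0], network)
print(output)  # a single value between 0 and 1
```

"Deep" simply means there are many such layers stacked on top of each other; the example has two so that it stays readable.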

What is Natural Language Processing?

Natural Language Processing (NLP) allows machines to understand, interpret, and generate human language. NLP techniques break down spoken or written content into words, phrases, and structured data, which can then be analyzed and acted upon. As the machine learning algorithms behind NLP are exposed to more data, they become more accurate and effective, improving capabilities such as sentiment analysis, text summarization, and machine translation.
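As a rough illustration of breaking text into words and acting on them, the Python sketch below splits a sentence into tokens and scores its sentiment against two small word lists. The word lists and function names are invented for this example, and the approach is deliberately simplistic: it uses fixed rules rather than machine learning, so it will miss sarcasm, negation, and any word not on the lists.

```python
import re

# Tiny hand-made word lists, for illustration only.
POSITIVE = {"good", "great", "love", "excellent"}
NEGATIVE = {"bad", "poor", "hate", "terrible"}


def tokenize(text):
    """Lowercase the text and split it into words, dropping punctuation."""
    return re.findall(r"[a-z']+", text.lower())


def sentiment(text):
    """Count positive and negative words and report which side wins."""
    tokens = tokenize(text)
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(tokenize("I love this phone!"))
print(sentiment("I love this phone!"))
print(sentiment("The battery is terrible."))
```

Modern NLP systems replace the hand-made word lists with models trained on large amounts of text, which is why they improve as they see more data.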

What are Large Language Models?

Large Language Models (LLMs) are deep learning models capable of recognizing, summarizing, translating, predicting, and generating text and other content based on knowledge derived from massive datasets. LLMs, such as the GPT models that power ChatGPT, generate human-like text by using context and patterns in language to predict what should come next. These models have transformed applications such as content generation, sentiment analysis, and even code generation by providing context-aware suggestions and predictions.
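The idea of predicting the next word from patterns in text can be shown with a toy model in Python. The sketch below counts which word most often follows each word in a short sample sentence, then uses those counts to guess the next word. This is a simple "bigram" counter, not how an LLM actually works internally: real LLMs use large neural networks and consider far more context. The function names and sample text are made up for the example.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """For every word, count which words follow it in the text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model, word):
    """Return the word seen most often after `word`, or None if it was never seen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# A tiny sample "dataset"; real models learn from billions of words.
corpus = "the cat sat on the mat and the cat slept"
model = train_bigrams(corpus)

print(predict_next(model, "the"))    # "cat" follows "the" twice, "mat" only once
print(predict_next(model, "zebra"))  # never seen, so there is no prediction
```

Scaling this basic "what usually comes next?" idea up with neural networks and vast amounts of text is, loosely speaking, what lets LLMs produce fluent, context-aware writing.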

In conclusion, AI, machine learning, deep learning, and natural language processing are powerful technologies that have the potential to revolutionize the way we live and work. Instead of fearing these advancements, we should embrace them and harness their capabilities to improve our lives. By understanding the basics of these concepts, we can better appreciate their potential to drive innovation and solve complex problems. As we continue to develop and refine AI, it is crucial to remain mindful of its ethical implications while fostering an environment where we can utilize these technologies responsibly for the betterment of society.

Also, read our FAQ section, where we answer the most commonly asked questions about Artificial Intelligence. If you would like to learn more about OpenAI and how it came about, we have that covered too.